New, censored research: "unexpected" patterns in the global pandemic response data

At a country level, the 2020-2022 public health interventions did not exactly work as intended. Is this why the WHO did not want my analysis published in their Bulletin?

I am pleased to report the publication of one of the first analyses completed as part of the RECOVER19 project introduced in my last post (and yes, that was quite a while ago…). The International Journal of Scholarly Research in Multidisciplinary Studies, an open-access journal based in the Philippines, published my article “Unexpected patterns in the global COVID-19 pandemic data”. In the following paragraphs, I summarize the results, which suggest a widespread failure of the COVID-19 pandemic response, and outline the publication history, which provides yet another example of the blatant censorship of inconvenient research.

For this work, I pursued a simple yet neat (I think!) approach using pre-existing statistics: the daily updates compiled for the Our World In Data (OWID) COVID-19 Data Explorer from sources such as the World Health Organization, the Center for Systems Science and Engineering at Johns Hopkins University, and the Oxford COVID-19 Government Response Tracker (OxCGRT). I distinguished three groups of indicators:

Public health outcomes, including new COVID-19 cases per 100,000 people; COVID patients in intensive care per 100,000 people; total COVID-attributed deaths per million; and excess mortality as a percentage difference of actual to projected deaths.

Government interventions, including testing rates; the government response stringency index from the OxCGRT dataset (which represents several policies aimed at mobility reduction); degree of school closures and scope of face covering policies from the same source; and percentage of the population who were fully vaccinated (as per initial vaccination protocol).

Socio-economic determinants, including share of a country’s population who are 65 years or older; gross domestic product per capita; and the United Nations’ Human Development Index (HDI), which itself is composed of measures for life expectancy, education, and incomes.

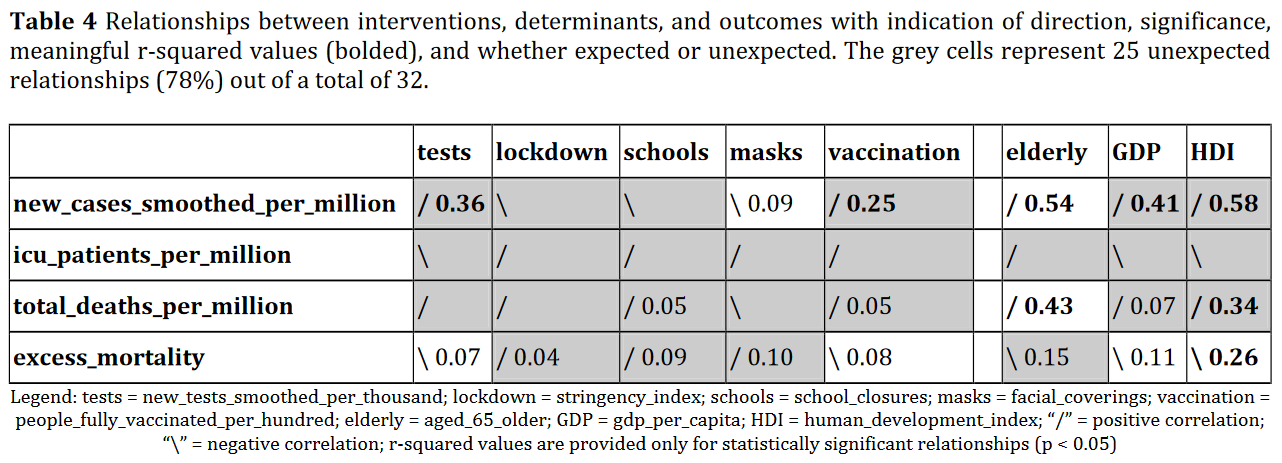

I uploaded these data to a Tableau Public visualization, available here, and interrogated them on the basis of what I have to assume were the expected (beneficial) effects of government interventions and pre-existing socio-economic conditions on the pandemic outcomes. For example, stricter interventions were intended to yield demonstrably better outcomes (fewer cases, hospitalizations, and deaths). But what I found was that between 78% and 92% of the relationships between the above factors were not statistically significant or pointed in the wrong direction altogether!

For example, at a global per-country scale for the years 2020-2022 combined (2021-2022 for vaccination), more testing and more vaccinations were associated at a statistically significant level with more, not fewer, cases. As well, stricter lockdowns, more school closures, and wider-ranging mask mandates all correlated with greater excess mortality (statistically significant although with a low explanatory power) as well as higher hospitalizations (not statistically significant), instead of improved (reduced) outcomes.
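For readers who want to try this kind of tally on their own extract of the data, here is a minimal sketch of the sorting logic described above: each intervention-outcome pair is labeled as "expected", "not significant", or "opposite", depending on the sign of its correlation and on a significance test. This is not the procedure used in the article. The permutation test stands in for whatever test a statistics package would report, the 0.05 threshold is an assumption, and the function names and sample numbers are invented purely for illustration.

```python
import math
import random


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx = sum(xs) / n
    my = sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def permutation_p_value(xs, ys, n_perm=2000, seed=0):
    """Two-sided permutation p-value for the Pearson correlation.

    Shuffles ys repeatedly and counts how often the shuffled |r| is at
    least as large as the observed |r|.
    """
    rng = random.Random(seed)  # fixed seed so the result is reproducible
    observed = abs(pearson_r(xs, ys))
    shuffled = list(ys)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)
        if abs(pearson_r(xs, shuffled)) >= observed:
            hits += 1
    return (hits + 1) / (n_perm + 1)


def classify(xs, ys, expected_sign, alpha=0.05):
    """Label a relationship relative to the hypothesized direction.

    expected_sign is -1 if more of x was expected to go with less of y
    (e.g. stricter measures, fewer deaths) and +1 for the reverse.
    alpha is an assumed significance threshold.
    """
    r = pearson_r(xs, ys)
    if permutation_p_value(xs, ys) >= alpha:
        return "not significant"
    return "expected" if r * expected_sign > 0 else "opposite"


# Made-up numbers for illustration only; NOT taken from OWID or the article.
stringency = [float(i) for i in range(20)]
outcome = [2.0 * s + 1.0 for s in stringency]
print(classify(stringency, outcome, expected_sign=-1))
```

Running `classify` over every intervention-outcome pair and counting the labels gives the kind of share reported above. A permutation test avoids distributional assumptions, but with only a few dozen countries per continent it has limited power, so "not significant" should be read with that in mind.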

A variety of other “unexpected” (or not so unexpected, depending on your position vis-a-vis “the fence”) patterns can be gleaned from the tables and charts in the published article. As an example of a chart, Figure 4, reproduced below, illustrates that countries with greater wealth as measured by the Human Development Index tended to have more, not fewer, COVID-19 deaths across continents and years, with the exception of Europe, where the expected relationship of wealth-to-health holds true (hard to read in the figure but documented in the associated Tables 6 and 7 in the article).

A number of limitations with this research are acknowledged in the article, including the relatively coarse country scale (no distinction of interventions and outcomes within a country); the selection of indicators; questions about variable definitions and data accuracy at the source; the possibility of missing time-delayed effects; and, of course, the fact that statistical correlation does not prove that two factors are in a causal relationship. (Although in this case we are more concerned with the negation of the principle that correlation does not imply causation, and I’d have to think more carefully about whether the lack of correlation actually does prove a lack of causation?)

Either way, as a result of these limitations, I am careful to call for “an unbiased, critical reassessment of the global pandemic response” and more in-depth research, perhaps starting from a position of assuming that none of the measures worked and trying to prove that some did work (good luck!). And with all of this in mind, my conclusions do include the following (compiled from Sections 4 and 5):

For the most part, and contrary to widely communicated expectations, testing, lockdowns, school closures, face masks, and vaccination could not be shown to have a positive effect on COVID-19 cases, hospitalizations, and deaths. The few exceptions, where our initial hypotheses were not rejected, were concentrated in Europe. The largely unexpected patterns in the global COVID-19 data suggest that the pandemic response was not evidence-based and thus must be considered a policy blunder.

Pending more detailed research, preliminary recommendations for future crises include to refrain from most lockdowns and school closures; make face coverings and vaccinations optional; and learn from the way in which poorer developing countries responded to this pandemic.

Now that this article is freshly published, it’s also an opportune moment to review its short yet turbulent history. The idea for the project emerged in parallel to a computer lab assignment developed for my senior elective course on geographic information systems (GIS) and decision support. I wanted to expose my BA in Geographic Analysis students to the OWID data and asked them to create a Tableau dashboard, find some unexpected patterns in the global COVID-19 data, and write up their exploratory analysis as a lab report. My first draft file for the article manuscript dated 5 April 2023 contained my own lists of important indicators and several screenshots of interesting scatterplots, including one showing greater COVID-19 death rates unexpectedly associated with higher HDI values, stricter lockdowns, and more vaccinations. Meanwhile, the students in my course tended to argue along the dominant narrative, even if the graphs they described were showing the opposite!

From 24 April to 5 May, I participated in a 14-day writing challenge organized by the US-based “National Center for Faculty Development & Diversity”, during which I essentially completed the manuscript for the article, expanding it from some 300 words to over 4,000. The challenge provided the motivation to write for several hours every day. Although I did not find it important enough to acknowledge it in the article, I wanted to mention it here since others may benefit from this kind of writing community.

On 14 May, I submitted the completed, formatted text to the journal AIMS Press Public Health. This is a low-cost open-access journal with a US university-based publisher that has published some critical COVID-19 research. However, after I answered some additional questions on the morning of 15 May, I received an editorial rejection without concrete feedback by the evening of that day:

We are writing to inform you that we will not be able to process your paper further. Papers sent for peer-review are selected on the basis of discipline, novelty and general significance, in addition to the usual criteria for publication in scholarly journals. Therefore, our decision is not necessarily a reflection of the quality of your research.

It is possible that the first paragraph of the manuscript was too revealing of my general position. It read something like this: “The widely believed story of COVID-19 is that a novel coronavirus was discovered in late December 2019 in the Chinese city of Wuhan. First called 2019-nCoV, later rechristened SARS-CoV-2 [1], the virus was assumed to hit an immunologically naive human population. A highly sensitive test using the PCR technology [2] was developed and used globally in a somewhat haphazard manner [e.g. 3], making it possible to find SARS-CoV-2 wherever doctors or public health officials were looking for it.” This might have been too much to swallow for an unsuspecting editor of a public health journal. What do you think?

With a toned-down first paragraph, I decided to venture into the lion’s den by submitting to the WHO Bulletin on 17 May. After all, John Ioannidis’ offensively low COVID-19 mortality estimates had (eventually) been published by the Bulletin! And indeed, after a screening for “originality, public health relevance, interest for an international audience and suitability for the Bulletin’s general readership”, the manuscript was sent out to at least two reviewers. Alas, on 9 August, I was notified that:

After undergoing this initial assessment, your paper was reviewed by peers and by the Bulletin’s editorial committee, and the consensus opinion of these experts has unfortunately resulted in your paper not being further considered for publication.

The editorial committee concurs with the second reviewer that collinearity needs to be explicitly explored within a wider group of authors who would be able to provide more information on the particular reporting biases in each country and time period.

In light of the above, let me outline the two reviews received. Reviewer 1 provided a copy of the manuscript’s PDF file annotated with only eight concrete recommendations, including a few helpful requests for clarifications and some technical improvements. Reviewer 2 wrote a more typical review, starting with a one-sentence summary and general comment:

This is a nicely done study that, as the abstract concludes, “suggests the need for an unbiased, critical assessment of the global pandemic response….”

My main general comment is that some of the Tables and Figures need some formatting improvement.

This is followed by several specific recommendations for improving the figures and tables, concrete requests to clarify the variable definitions, recommendation of an additional contextual reference, several important but easily implemented clarifications in the methods, and several detailed suggestions for improving the wording of some of the observed results.

In this context, the reviewer wonders, “if ‘more tests’ and ‘more vaccinations’ might be collinear with ‘HDI’ and/or ‘GDP’, such that they may just be reflecting a higher ‘GDP’ of the country. Can you check this possibility?” It appears that the Bulletin’s “editorial committee” used this comment to shut down the submission; testing this (and other) collinearities would have been easily done as the reviewer suggested, but I was not given the opportunity.
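To make the reviewer's question concrete, here is a minimal sketch of one simple way such a check could look: compare the raw correlation between an intervention and an outcome with the partial correlation that holds a third variable (say, GDP) fixed. It uses the standard first-order partial correlation formula. It is only one of several possible checks (variance inflation factors or a multiple regression would be others), it is not something that was run for the article, and the variable names and numbers are invented for illustration.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx = sum(xs) / n
    my = sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def partial_r(xs, ys, zs):
    """Correlation of xs and ys after removing the linear effect of zs.

    First-order partial correlation:
    (r_xy - r_xz * r_yz) / sqrt((1 - r_xz^2) * (1 - r_yz^2))
    """
    r_xy = pearson_r(xs, ys)
    r_xz = pearson_r(xs, zs)
    r_yz = pearson_r(ys, zs)
    return (r_xy - r_xz * r_yz) / math.sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))


# Made-up numbers for illustration only; NOT taken from OWID or the article.
# Both "tests" and "cases" are built as the hypothetical "gdp" plus a small
# wobble, so any raw correlation between them is driven by gdp.
gdp = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
tests = [g + e for g, e in zip(gdp, [1, -1, 1, -1, 1, -1, 1, -1])]
cases = [g + e for g, e in zip(gdp, [1, 1, -1, -1, 1, 1, -1, -1])]

print("raw r(tests, cases):        ", round(pearson_r(tests, cases), 3))
print("r(tests, gdp):              ", round(pearson_r(tests, gdp), 3))
print("partial r(tests, cases|gdp):", round(partial_r(tests, cases, gdp), 3))
```

If the partial correlation shrinks toward zero while the raw one is large, as in this constructed example, the intervention variable may mostly be reflecting the third variable. If it barely changes, collinearity with that variable does not explain the pattern.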

Reviewer 2 concluded by lauding the expressed limitations as “well mentioned”, suggested adding the “timing of interventions in relation to outcomes” to the limitations and areas for future research, and ended with what looks more like another endorsement than a criticism:

I agree that “the large amount of unexpected relationships suggests that the COVID-19 pandemic response failed”, although I would say “… the large number of unexpected relationships suggests that the COVID-19 pandemic responses largely failed” may be better wording.

I have had only a few reviews in my career that were as supportive and easy to respond to as these. But I have also learned that one critical statement from a reviewer of a manuscript or grant proposal is enough for a committee to reject your work, if they are so inclined, potentially for other, more fundamental yet unspeakable reasons.

Although I was quite taken aback by the Bulletin’s rejection, I turned around, implemented most of their reviewers’ recommendations, and submitted to the International Journal of Scholarly Research in Multidisciplinary Studies (IJSRMS), which had just invited me to submit my work. (Edit: The “received” date in the article’s publication history has now been corrected to 19 August 2023.)

Given the utter lack of multidisciplinary perspectives in the COVID-19 response, IJSRMS seemed quite a good fit. It might be called a predatory journal by some, but with an article processing charge of US$ 32, this label seems pretty silly. Their visibility is likely quite limited, so I am relying on other avenues and would like to encourage everyone reading this to share the article and/or interactive visualization widely. Thanks!

I'm sorry but clearly you failed to understand the goal of OPERATION COVIDIUS... The following is official data for Portugal:

https://postimg.cc/Z9VDq1SG

Clearly a success.